DIH4AI: X-MUC-2_Platform-as-a-service for accountable evidential transactions

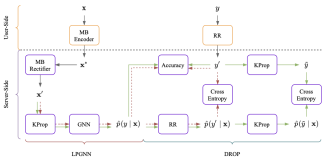

In the domains of aeronautics, automotive, energy, manufacturing and retail, the Munich Innovation Hub for Applied AI proposes novel solutions to counter the complexity and dependability challenges resulting from distributed accountability, the need for more ...